Max enrollment: 16

Veteranenstrasse 21

10119 Berlin

These two intensive course units on AI music (“Introduction to Terminal Usage and Python Programming” and “Python and Machine Learning for Audio”) let you start from the very basics of programming and work your way up to hands-on training in creating sound with machine learning algorithms, Markov chains, and Music Information Retrieval, and in training other types of machine learning routines on datasets. The course will feature an AI club night with Hexorcismos and Ktonal, as well as an opportunity to have your own AI music premiered at the Acud Club alongside Ktonal.

AI MUSIC 1: Introduction to Python Programming

This course provides foundational knowledge in both terminal operations and Python programming, especially for musical applications. Initially designed as a preparatory module for the “Machine Learning for Audio” course, it is also suitable for individuals in artistic disciplines seeking to engage with programming, particularly in the context of audio and multimedia applications.

Fundamentals Session, Monday, 30th October, 19:00 – 22:00

Bring yourself up to speed for the AI Music course even as a complete beginner: an introduction to Python programming with IPython notebooks. Grasp fundamental concepts such as data types and formats. Learn to read data from different file formats into Python. Understand the mechanics of code execution and the interplay between interpreters and object code. Navigate Python’s scientific computing infrastructure, including libraries like NumPy and SciPy. Develop a clear understanding of object-oriented programming, encompassing classes, objects, and methods. Learn how to decipher error messages and find documentation.

AI MUSIC 2: Python and Machine Learning for Audio

This course introduces students to the application of machine learning techniques for audio and music, offering a journey from the basics to the artistic application of these techniques. For students without a background in programming, the crash course “Introduction to Terminal Usage and Python Programming” is meant to bring everybody up to speed with the prerequisite knowledge. The course starts by introducing Python as a tool to process audio data and progresses into machine learning theory, tracing the evolution of AI, exploring different learning paradigms, and delving into key concepts such as parameters, hyperparameters, and neural networks. The theory is complemented by hands-on work using ‘mimikit’, a versatile tool that embodies the course’s commitment to bridging the gap between the technical and the creative.

Session 1 – Python for Audio Processing, Wednesday, 1st November, 18:00 – 21:00

Understand core concepts of digital audio data (sampling rate, bit depth) and the differences between time and frequency domains in music. Audio Tools & Acoustic Principles: Techniques for loading and plotting waveforms and spectrograms. Look into some basic audio feature extraction methods.

Simple signal processing methods like convolution, filtering, amplitude followers, resampling.
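To give a feel for the signal processing methods named in Session 1, here is a minimal sketch in plain Python (standard library only). It is an illustration written for this listing, not course material: the function names, the 8000 Hz sampling rate, and the decay coefficient are all assumptions chosen for readability. It builds a sine tone, smooths it with a moving-average filter implemented as a convolution, and tracks its level with a simple peak-based amplitude follower.

```python
import math

SAMPLE_RATE = 8000  # samples per second (assumed value for this sketch)


def sine_wave(freq_hz, num_samples, sample_rate=SAMPLE_RATE):
    """Return num_samples of a sine tone with amplitude 1."""
    return [math.sin(2 * math.pi * freq_hz * n / sample_rate)
            for n in range(num_samples)]


def convolve(signal, kernel):
    """Full discrete convolution: output has len(signal) + len(kernel) - 1 samples."""
    out = [0.0] * (len(signal) + len(kernel) - 1)
    for i, s in enumerate(signal):
        for j, k in enumerate(kernel):
            out[i + j] += s * k
    return out


def moving_average(signal, width):
    """Low-pass filter: convolve with a flat kernel whose weights sum to 1."""
    kernel = [1.0 / width] * width
    return convolve(signal, kernel)


def amplitude_follower(signal, decay=0.99):
    """Peak follower: jump up to |sample| instantly, decay exponentially otherwise."""
    envelope = []
    level = 0.0
    for s in signal:
        level = max(abs(s), decay * level)
        envelope.append(level)
    return envelope


# A 400 Hz tone at 8000 Hz has a period of exactly 20 samples.
tone = sine_wave(400, 400)
smoothed = moving_average(tone, 20)   # averaging over one full period cancels the tone
envelope = amplitude_follower(tone)   # stays close to the tone's peak amplitude
print(len(tone), len(smoothed), round(max(envelope), 3))
```

In practice, libraries such as NumPy and SciPy provide much faster versions of these operations; the loops above only make the arithmetic visible.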

FRIDAY, November 3rd from 21:00

CONCERT 1: Hexorcismos meets Ktonal, Acud Club

Session 2 – Introduction to Machine Learning, Monday, 6th November, 18:00 – 21:00

Evolution of AI and Machine Learning: A historical perspective on AI and machine learning and their relevance in music. Machine Learning Paradigms: Discussion of supervised, unsupervised, and reinforcement learning, and of the differences between physical models and black-box approaches. Linear Regression and Neural Networks: Examination of basic concepts, applications, and the importance of parameters and hyperparameters. Training Concepts: Understanding datasets, batching, optimization, and inference.
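The training concepts listed for Session 2 can be illustrated with a small linear regression trained by mini-batch gradient descent. The following is a hedged sketch in plain Python, not the course's own code: the function names, the toy dataset, and the hyperparameter values are assumptions picked for illustration. The weight `w` and bias `b` are the parameters learned from data, while the learning rate, batch size, and number of epochs are hyperparameters set by hand.

```python
import random


def predict(w, b, x):
    """Inference: apply the learned parameters to a new input."""
    return w * x + b


def train_linear_regression(xs, ys, learning_rate=0.05, batch_size=4,
                            epochs=300, seed=0):
    """Fit y = w * x + b by mini-batch gradient descent on the squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0                      # parameters, learned from the data
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)             # visit the dataset in a new order each epoch
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            grad_w = 0.0
            grad_b = 0.0
            for i in batch:
                error = predict(w, b, xs[i]) - ys[i]
                grad_w += 2 * error * xs[i]   # d(error^2)/dw
                grad_b += 2 * error           # d(error^2)/db
            # Optimization step: move against the average gradient of the batch.
            w -= learning_rate * grad_w / len(batch)
            b -= learning_rate * grad_b / len(batch)
    return w, b


# Toy dataset (assumed for this sketch): points on the line y = 3x + 1.
xs = [i / 10 for i in range(20)]
ys = [3 * x + 1 for x in xs]
w, b = train_linear_regression(xs, ys)
print(round(w, 3), round(b, 3), round(predict(w, b, 2.5), 3))
```

A neural network is trained with the same loop in principle; only the model inside `predict` and the way gradients are computed become more elaborate.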

Session 3 – Practical Machine Learning with Mimikit, Wednesday, 8th November, 18:00 – 21:00

Understanding Audio Network Architectures: An in-depth look at the structure and functioning of various audio network architectures. Exploring Advanced Networks: Hands-on exploration of Freqnet, Wavenet, SampleRNN, seq2seq, and clustering methodologies. Overview of Music/Audio-Related Projects: Insight into other notable music and audio-related machine learning projects, providing context and application perspectives. Training with Mimikit: A practical approach to training machine learning models on audio datasets using Mimikit. Sound Generation: Guidance on using trained models to generate sounds.

Session 4 – Advanced Machine Learning Techniques and Artistic Applications, Monday, 13th November, 18:00 – 21:00

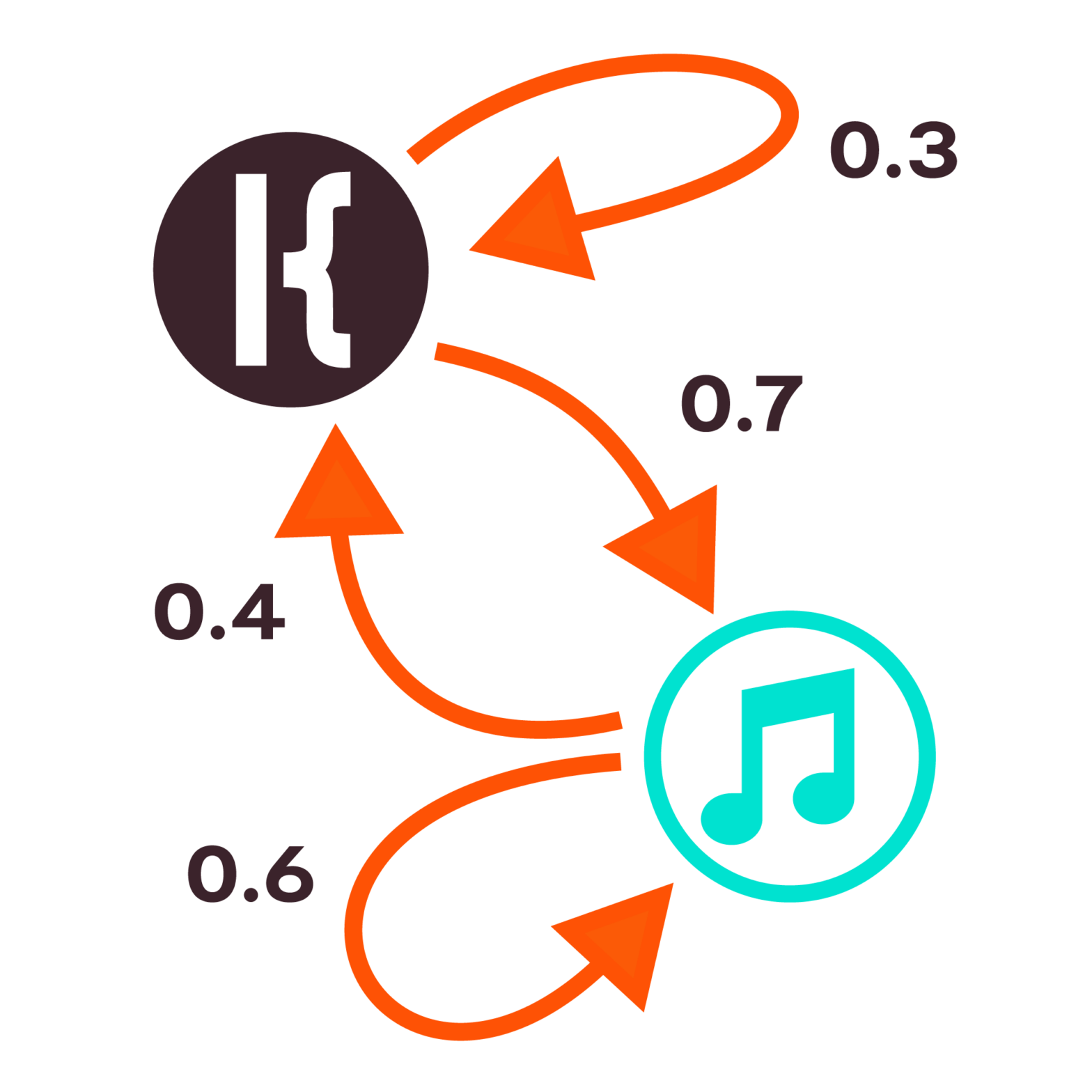

Hands-On Training and Sound Generation: Practical training on audio datasets with Mimikit and generating sounds using trained models. Model Management: Techniques for handling checkpoints and refining models for improved performance. Advanced generation methods with Ensemble Generator, combining multiple models by pattern-based switching. Artistic Work, Dataset Design, and Composition: Analysis of the artistic implications of data selection and of incorporating machine learning tools in the music composition process.

Session 5 – Latent Sounding: Introduction to Neural Audio Synthesis AI with Hexorcismos AKA Moisés Horta Valenzuela, Wednesday, 15th November, 18:00 – 21:00

The realm of audio synthesis is ever-evolving, and the dawn of neural synthesis has ushered in a new era of possibilities thanks to cutting-edge machine learning techniques.

Join us in this hands-on workshop as we navigate the fascinating landscape of neural audio synthesis. Journey through its intriguing history, entwined with the evolution of artificial neural networks, and immerse yourself in real-time experimentation by training machine learning models using unique sound samples.

Diving deep into audio synthesis through neural networks, this workshop uncovers the intricacies of a specialized AI domain that focuses on crafting generative sound synthesizers. These synthesizers, grounded in features drawn from original audio samples, have the prowess to not only replicate the nuances of the original dataset but also achieve timbre transfer. This groundbreaking approach enables the transformation of a fresh audio signal to mirror the timbral qualities absorbed by the algorithm.

An intriguing aspect we’ll explore is the amalgamation of different sounds, birthing an entirely novel auditory experience. This innovative approach redefines the boundaries of computer music composition, sound design, and sonic art, propelling us from fixed media concepts to a generative perspective on sound.

To provide a tangible feel, we’ll be delving into various sound artworks that tactfully employ and experiment with these algorithms. Furthermore, by viewing the dataset as a lens, we will touch upon broader socio-cultural themes.

By the end of the session, participants will have a foundational grasp of these avant-garde techniques, empowering them to seamlessly integrate them into their existing sound design, music production, and compositional practices.

Our exploration will harness the power of renowned open-source algorithms while reflecting upon their underlying ideologies. Along this journey, we’ll shed light on the diverse artistic implementations and the consequential ethical considerations they introduce to the sound creation process.

A computer is a must-have for this workshop. While programming expertise isn’t a prerequisite, it’s a valuable asset.

Echoing the ethos of our workshops, we cherish the diversity of our participants, believing that varied backgrounds brew the most innovative ideas. So, if you’re intrigued but uncertain about fitting in, you’re precisely who we’re looking for! Join us and resonate with the future of sound.

SATURDAY, November 18th from 21:00

CONCERT 2: Ktonal and featured works of BeSoS AI Participants + guest, Acud Club

Moisés Horta Valenzuela / 𝔥𝔢𝔵𝔬𝔯𝔠𝔦𝔰𝔪𝔬𝔰

Moisés Horta Valenzuela (1988, he/him) is an autodidact sound artist, creative technologist and electronic musician from Tijuana, México, working in the fields of computer music, Artificial Intelligence and the history and politics of emerging digital technologies. He received the A.I. Newcomer 2023 award from the Association of Informatics of Germany.

As 𝔥𝔢𝔵𝔬𝔯𝔠𝔦𝔰𝔪𝔬𝔰, he crafts an uncanny link between ancient and l through a critical lens in the context of contemporary electronic music and the sonic arts. His work has been presented at Ars Electronica, the NeurIPS Machine Learning for Creativity and Design workshop, MUTEK México, Transart Festival, MUTEK: AI Art Lab Montréal, Elektron Musik Studion, CTM Festival Berlin, KTH: Royal Institute of Technology Stockholm, and the Intelligent Instruments Lab, Reykjavik, among others.

He is currently working as an AI music technologist, developing new instruments that use generative neural networks and independently organizing workshops on creative AI art practices centered on sound and image synthesis and the demystification of neural networks. He is also developing SEMILLA.AI, an interface for interacting with generative neural sound synthesizers through ancient Mesoamerican divination practices, and OIR, an online channel for a semi-autonomous meta-DJ trained on thousands of hours of visuals and music from global electronic club music and techno. He worked most recently as lead AI technologist for Marianna Simnett’s GORGON, commissioned by LAS Art Foundation, as well as in R&D and engineering in AI music for the KORUS AI platform.

KTONAL (https://ktonal.com/) is a group of composers dedicated to AI-powered art with a main focus on music. Founded in October 2020, KTONAL conducts research, writes code, and composes music and audiovisual pieces. Neither an artists’ collective nor a group of artists, KTONAL is a unique collaborative structure where five Berlin-based composers conceive, experiment and execute works they collectively author. Each KTONAL member has many years of experience with electro-acoustic music and intermedial art as well as with more traditional instrumental concert forms. In its first year of collaboration, KTONAL developed several software packages to enable its artistic production, published sound studies on its social media channels and composed its first opus, “Le Mystere des Voix Neuronales”, which was performed at the AIMC (Artificial Intelligence Music Creativity) Conference in Graz, Austria. In addition, a presentation and a discussion on “Artificial intelligence and music composition” were held for students as part of the KiK discussion class at the Hochschule für Musik Hanns Eisler Berlin. Members: Antoine Daurat, Björn Erlach, Roberto Fausti, Genoël von Lilienstern, Dohi Moon.

Antoine Daurat (b. 1985, Paris) began his musical education with violin and piano lessons at a young age. After his high school graduation, he prepared for a few years for the Conservatoire’s entrance examination in musicology, but left Paris for Berlin at the age of 21 to pursue composition. He studied instrumental and electroacoustic composition at the Hochschule für Musik Hanns Eisler, working primarily with Hanspeter Kyburz and Wolfgang Heiniger. After several years spent working as a freelance composer and conducting/performing in the contemporary music theatre scene, Antoine Daurat started focusing on software development (AI and web) and is now a full-stack developer.

Bjoern Erlach is a composer of computer music and a software developer. He started in Cologne, moved to The Hague to study Sonology, and afterwards to Palo Alto to do a PhD at CCRMA. Today he lives in Berlin, where he works on projects involving physical realizations of abstracted models of sound production in particular, and electronic music, algorithmic composition and sound installations in general.

Roberto Fausti is a Berlin-based composer who grew up in Rome. In 2005 he received his piano diploma at the Municipal Conservatory of Frosinone, where he studied with Gilda Buttá. He also studied composition there. From 2009 to 2015 he continued his composition studies at the Hochschule für Musik Hanns Eisler with Prof. Hanspeter Kyburz and Prof. Wolfgang Heiniger. He has composed for the ensemble Zafraan (Berlin, 2015, premiere of Oktätt), for the ensemble Accroche Note (Strasbourg, 2015, premiere of trio) and for the ensemble ascolta (Berlin, 2016, premiere of Caramelle).

Genoël von Lilienstern works across the fields of instrumental composition, music theatre and sound installation. His work explores connections between audiovisual collage techniques, contextualizations of music and structural processes. His compositions have been performed by ensembles such as Ensemble Intercontemporain, SWR Orchestra and Stuttgarter Vocalsolisten.

Dohi Moon is a Berlin-based Korean composer, pianist, and kinetic sound installation artist. Her music has been commissioned and performed throughout the United States, Europe, and Asia. She studied piano performance at Seoul National University, South Korea and obtained her doctoral degree in music composition at Michigan State University, U.S.A. Before moving to Berlin, she was a visiting scholar at the CCRMA (Center for Computer Research in Music and Acoustics) at Stanford University.

Bookings

Bookings are closed for this event.